Latest Tech News Breakdown: The Biggest AI, Chip, and Privacy Updates You Need to Know This Week

This week’s biggest AI, chip, and privacy updates have one thing in common: they change how fast devices make decisions and how easily the data behind those decisions leaks to apps. If you’ve noticed a new wave of permission prompts, faster on-device features, or privacy settings that changed without your input, you’re not imagining it. 2026-era tooling is moving faster than most users can review.

In the sections below, I’ll break down the most important updates for AI assistants, modern chip platforms, and privacy enforcement, then turn them into practical steps you can apply today. I’m also adding a quick “what most people get wrong” lens, because these releases routinely get misunderstood and that leads to the wrong settings choices.

Quick Take: What’s really changing in this week’s Latest Tech News Breakdown

The biggest shift is that AI capabilities are moving from cloud-only to hybrid on-device + cloud pipelines, and chip vendors are optimizing for that split. Privacy updates are following the same pattern: more telemetry is happening at the edge, so you need to understand which data leaves your device and when.

In practical terms, expect three changes: (1) AI features getting faster without obvious UI changes, (2) new app permissions tied to “analytics,” “device info,” or “personalization,” and (3) privacy policy language tightening around retention and model training.

AI Updates: The newest assistant features and the hidden data costs

AI assistant updates in the Latest Tech News Breakdown this week focus on better context and more “helpful” automation. The tradeoff is that richer context usually means more sources feeding the model—your messages, notifications, clipboard history, or device events.

On-device AI is getting more capable—here’s what to check first

On-device inference is faster and reduces cloud round-trips, but it doesn’t automatically mean “no data leaves your phone.” Many vendors run lightweight local models and then call cloud APIs for tasks like retrieval, translation, or large language responses.

What I do on every device after a major AI update is check three settings layers: (a) the AI app’s in-app privacy page, (b) OS-level permissions (especially “notifications access,” “accessibility,” and “personalization”), and (c) network permissions for the app.

- Review permissions: Look specifically for “Read notifications,” “Appear on top,” “Accessibility,” and “Usage access.” Those are common enablers for improved assistant context.

- Check personalization toggles: If the AI app offers “Improve results using your activity,” turn it off unless you truly want tailored behavior.

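- Inspect background data: On Android, open the app’s data usage page (typically Settings > Apps > the app > Mobile data & Wi‑Fi, though menu names vary by vendor) and check the background data toggle. On iOS, review the app’s Cellular Data and Background App Refresh settings.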
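The permission review above can be sketched in a few lines of Python. This is a minimal illustration, assuming you copy the permission labels by hand from the app’s settings screen. The label set comes from the checklist above, the `flag_high_risk` helper is hypothetical, and the exact wording differs between OS versions and vendors.

```python
# Labels taken from the checklist above; exact wording varies by OS and vendor.
HIGH_RISK_LABELS = {
    "read notifications",
    "appear on top",
    "accessibility",
    "usage access",
}


def flag_high_risk(granted_labels):
    """Return the granted labels that match the high-risk set, sorted alphabetically."""
    return sorted(
        label for label in granted_labels
        if label.strip().lower() in HIGH_RISK_LABELS
    )


# Hypothetical list copied by hand from an app's permission screen.
granted = ["Microphone", "Read notifications", "Accessibility", "Camera"]
print(flag_high_risk(granted))
```

Anything the function returns is a candidate for a closer look, not an automatic revoke.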

Big AI mistake: assuming “opt out” also means “no training”

What most people get wrong is treating an “opt out of personalization” switch as a universal stop button. In 2026, I’ve seen cases where personalization is off, but the app still logs anonymous usage metrics for quality scoring and abuse prevention.

That matters because “anonymous” can still be linkable when combined with device identifiers or behavioral patterns. For sensitive workflows—banking, health tracking, work emails—keep assistant features off or limit them to local-only modes when available.
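To make the linkability point concrete, here is a small sketch with made-up records. None of the fields is a name or account ID, yet combining a few coarse attributes can single out every row. The field names and values are assumptions for illustration only.

```python
from collections import Counter

# Hypothetical "anonymous" usage records: no name or account ID, only coarse attributes.
records = [
    {"device_model": "A1", "timezone": "UTC+1", "locale": "en-GB", "active_hour": 7},
    {"device_model": "A1", "timezone": "UTC+1", "locale": "en-GB", "active_hour": 22},
    {"device_model": "A1", "timezone": "UTC+1", "locale": "de-DE", "active_hour": 7},
    {"device_model": "B2", "timezone": "UTC+1", "locale": "en-GB", "active_hour": 7},
]


def unique_fraction(rows, fields):
    """Fraction of rows whose combination of `fields` appears exactly once."""
    keys = [tuple(row[f] for f in fields) for row in rows]
    counts = Counter(keys)
    return sum(1 for key in keys if counts[key] == 1) / len(rows)


# One coarse field alone identifies nobody in this sample.
print(unique_fraction(records, ["timezone"]))
# Three coarse fields together single out every record in this sample.
print(unique_fraction(records, ["device_model", "locale", "active_hour"]))
```

Real datasets are much larger, but the same effect is why “anonymous” metrics deserve scrutiny when they travel with device details.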

Real-world scenario: the assistant that suddenly reads more

I recently worked with a client whose AI assistant update had enabled “notification summaries.” The UI looked benign, but the permissions screen showed broader access under the hood. After we disabled that feature and removed the notification permission, the assistant kept working for general prompts but stopped producing context derived from notification content.

This is the right direction: you want the AI’s convenience, not a silent data stream you didn’t consent to.

Chip News: Why new AI accelerators affect your privacy profile

Chip announcements in the Latest Tech News Breakdown aren’t just performance headlines. New AI accelerators and GPU/CPU scheduling changes determine where inference runs, how often the system wakes up, and which data gets moved between components.

In other words, chip updates can indirectly change battery drain, background activity, and telemetry patterns—even when the privacy setting screen looks unchanged.

What modern AI chip platforms prioritize in 2026

Current mobile and edge chip ecosystems optimize for three things: (1) low-latency inference, (2) efficient memory bandwidth (because models are data-hungry), and (3) secure execution environments for privacy-sensitive workloads.

Some vendors add “secure enclaves” or trusted execution modes for model handling. In practice, that doesn’t mean the vendor never sees data; it means the hardware can isolate certain processing steps from the rest of the system. You still need to check end-to-end telemetry behavior in app settings.

Edge inference vs cloud inference: the privacy difference that people miss

Edge inference is when the model runs on your device. Cloud inference is when the prompt or derived features are sent to remote servers for processing.

Here’s the privacy reality: edge inference can still send metadata (like app usage stats), while cloud inference can send more content (like user prompts). Your goal is to keep sensitive prompts local when the product supports it.

| AI Task Type | Typical Compute Path | Most Likely Data Exposure | User Action |
|---|---|---|---|
| Local suggestions (typing assist) | On-device model | Short-form telemetry/quality signals | Turn off personalization if you prefer |
| Summaries of notifications | Edge + optional cloud | Notification content or derived context | Revoke notification read permissions |
| Document Q&A | Often cloud retrieval | Document text + queries | Use “local only” options or redact |
| Real-time translation | Edge or cloud depending on language | Source phrases + timing | Check language packs and offline mode |
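The goal of keeping sensitive prompts local can be expressed as a simple routing rule. The sketch below is a thought experiment, not how any particular assistant works. The keyword list and the `choose_compute_path` function are hypothetical, and a real product would need a far better sensitivity check than substring matching.

```python
# Hypothetical keyword list; substring matching is a deliberately naive stand-in.
SENSITIVE_TERMS = ("bank", "diagnosis", "password", "salary")


def choose_compute_path(prompt, local_model_available):
    """Pick 'edge', 'cloud', or 'blocked' for a prompt.

    Sensitive prompts never go to the cloud: they run on-device or not at all.
    """
    sensitive = any(term in prompt.lower() for term in SENSITIVE_TERMS)
    if local_model_available:
        return "edge"
    return "blocked" if sensitive else "cloud"


print(choose_compute_path("Summarize my bank statement", local_model_available=True))
print(choose_compute_path("Summarize my bank statement", local_model_available=False))
print(choose_compute_path("Translate 'hello' to French", local_model_available=False))
```

As a user you can’t install this rule, but you can apply it by hand: if a task is sensitive and the app has no local mode, skip the feature.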

Battery isn’t just power—wakes are a privacy signal

When chip vendors push AI acceleration, background scheduling changes too. If you suddenly see faster battery drain, that can mean more frequent AI tasks running or more app polling for context. It’s not always malicious, but it’s a useful early warning.

I treat abnormal battery patterns as a cue to inspect app background permissions and network activity using built-in OS tools or a reliable network monitor.
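If you log daily battery use, “abnormal” can be given a rough definition. The sketch below assumes you note the percentage of battery used each day, and it flags a day that sits more than two standard deviations above your own recent average. The threshold and the sample numbers are assumptions, not a vendor metric.

```python
from statistics import mean, stdev


def is_abnormal(history, today, threshold=2.0):
    """True if today's drain exceeds the historical mean by more than
    `threshold` sample standard deviations. Needs at least two past days."""
    if len(history) < 2:
        return False
    return today > mean(history) + threshold * stdev(history)


# Hypothetical log: percent of battery used per day over the past week.
history = [40, 42, 38, 41, 39]
print(is_abnormal(history, today=55))
print(is_abnormal(history, today=41))
```

A flagged day is only a prompt to look at background permissions and network activity; heavy use or a hot car can produce the same spike.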

Privacy Updates: Enforcement, retention rules, and app permission reshuffles

Privacy enforcement is the other half of the chip + AI story. This week’s Latest Tech News Breakdown includes privacy policy changes, regulatory updates, and app permission flows that are getting stricter around consent and retention windows.

Two themes show up again and again: shorter retention unless a user opts in, and clearer disclosures about training or analytics use.

How to interpret new privacy language without getting fooled

App and platform updates increasingly include terms like “improve services,” “model improvement,” and “quality monitoring.” Those phrases can mean different things depending on the vendor’s data pipeline.

Use this checklist when an app updates its privacy policy:

- Look for explicit retention timeframes: “We store for X days” is better than “we store as needed.”

- Check training eligibility: Is “you” included by default, or only with opt-in? Prefer “no training by default.”

- Find out what data categories are collected: content, device identifiers, usage events, and location are different risk levels.

- Confirm deletion paths: There should be a clear “delete data” or account reset process.
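Part of this checklist can be turned into a first-pass text scan. The sketch below looks for explicit retention periods and for the vague phrases mentioned earlier. The regular expression and phrase list are my assumptions; real policies word these things in many ways, so treat hits as pointers for where to read closely rather than as a verdict.

```python
import re

# Assumed patterns; real policies phrase retention in many different ways.
RETENTION_RE = re.compile(r"\b\d+\s+(?:day|week|month|year)s?\b", re.IGNORECASE)
VAGUE_PHRASES = (
    "as needed",
    "as long as necessary",
    "improve services",
    "model improvement",
    "quality monitoring",
)


def scan_policy(text):
    """Return explicit retention periods and vague phrases found in policy text."""
    lowered = text.lower()
    return {
        "retention_periods": [m.group(0) for m in RETENTION_RE.finditer(text)],
        "vague_phrases": [p for p in VAGUE_PHRASES if p in lowered],
    }


sample = "We store voice clips for 30 days. Other data is kept as needed to improve services."
print(scan_policy(sample))
```

A policy with no retention hits and several vague phrases is the one to read most carefully.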

Case study: consent fatigue is a real threat model

In cybersecurity terms, consent fatigue is when users click “Allow” repeatedly because prompts become routine. That’s exactly what privacy updates aim to reduce by improving default settings and making data access more transparent.

My rule: if a permission request comes right after an AI feature announcement, pause and verify it matches the feature. Notifications access for notification summaries is reasonable; accessibility access for “better typing” is not.

Best practice for 2026: least-privilege for AI apps

Least-privilege means the app only has the permissions it needs. For AI assistants, that often means restricting context permissions and limiting background data access.

- Disable notification reading unless you actively use notification summaries.

- Remove clipboard access if you don’t use copy/paste intelligence features.

- Turn off “use device contacts” for AI matching unless you benefit from it daily.

- Prefer offline modes for translation and simple summarization when available.
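Least privilege boils down to a set difference: what the app has been granted minus what your enabled features need. Here is a minimal sketch. The feature names and the `FEATURE_NEEDS` mapping are hypothetical, and you would fill them in from the app’s own feature descriptions.

```python
# Hypothetical mapping from features to the context permissions they need.
FEATURE_NEEDS = {
    "notification_summaries": {"notifications"},
    "clipboard_actions": {"clipboard"},
    "contact_matching": {"contacts"},
    "offline_translation": set(),
}


def permissions_to_revoke(granted, enabled_features):
    """Return granted permissions that no enabled feature requires, sorted."""
    needed = set()
    for feature in enabled_features:
        needed |= FEATURE_NEEDS.get(feature, set())
    return sorted(set(granted) - needed)


granted = {"notifications", "clipboard", "contacts"}
enabled = ["notification_summaries", "offline_translation"]
print(permissions_to_revoke(granted, enabled))
```

With only notification summaries and offline translation in use, clipboard and contacts access have no job to do and can go.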

Cybersecurity Angle: The privacy changes that also affect your attack surface

Privacy and cybersecurity overlap more than most people realize. When apps request more context access (notifications, accessibility, device events), they create more pathways for misuse if the app is compromised or poorly designed.

This is where our app-permission lockdown guide pairs well with today’s Latest Tech News Breakdown. The steps are the same: reduce what third-party code can observe.

Watch for “permission creep” after AI updates

I’ve seen a pattern: after an AI update, apps ask for new permissions that weren’t required before. The update may be legitimate, but you need to verify it’s required for the specific feature.

Here’s a fast audit routine that takes under 10 minutes:

- Open Settings > Apps > (the AI app) > Permissions.

- Compare the list to what you enabled months ago. An old screenshot is best; memory will do if you have none.

- If anything changed, read the feature description and match it to the permission.

- Revoke anything not clearly tied to your usage.
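If you keep a written snapshot of an app’s permissions, the comparison step in this routine becomes mechanical. The sketch below diffs two hand-recorded lists. The `diff_permissions` helper and the sample labels are hypothetical.

```python
def diff_permissions(previous, current):
    """Compare two permission snapshots and report what was added or removed."""
    previous, current = set(previous), set(current)
    return {
        "added": sorted(current - previous),
        "removed": sorted(previous - current),
    }


# Hypothetical snapshots noted before and after an AI update.
before = ["Microphone", "Notifications"]
after = ["Microphone", "Notifications", "Accessibility", "Usage access"]
print(diff_permissions(before, after))
```

Everything under `added` is what you then match against the feature description before deciding to keep or revoke it.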

Why “secure processing” claims aren’t the whole story

Some privacy statements emphasize secure enclaves or encrypted processing. Those can reduce risk, but they don’t remove the need to manage consent, retention, and account-level deletion.

If you want a deeper security baseline, check the device hardening checklist I recommend before travel. It covers passkeys, MFA strategies, and app-store safety habits that matter when AI apps gain more privilege.

Gadget Reviews Tie-In: How to pick devices that handle AI responsibly

Not all “AI-ready” gadgets are equal. In the gadget world, the difference shows up in update cadence, privacy controls, and how clearly manufacturers expose settings for on-device processing.

If you’re shopping, I recommend prioritizing devices with transparent privacy dashboards and quick access to background activity controls. For a practical take, see my privacy-and-battery-first phone review.

What I look for in 2026 during my gadget review testing

- Background behavior: does the device spike network activity when AI features are “idle”?

- Permission clarity: are the permission names understandable, or buried in generic categories?

- Update speed: can you get security patches promptly? This reduces the risk of vulnerable AI integration.

- Offline capabilities: are core features available without cloud calls for sensitive tasks?

The surprising insight: better privacy often improves performance

Here’s my original angle from repeated testing: when I disable unnecessary context access, many AI apps feel faster and more predictable. A likely reason is that the app has fewer context sources to poll and the system has less background work to schedule.

So privacy isn’t only about avoiding risk—it can also make your day-to-day experience smoother.

People Also Ask: Answers to common questions about this week’s AI, chip, and privacy updates

What AI features are most likely to affect my privacy?

The highest-impact features are those that read context: notification summaries, call or meeting transcription, clipboard-aware actions, and document or email ingestion for Q&A. These features require either elevated permissions or stronger cloud integration.

If you don’t explicitly use them, revoke related permissions. If you do use them, set strict boundaries like limiting which apps can provide context.

Do chip upgrades always make AI safer?

No. Chip upgrades can improve efficiency and add secure execution capabilities, but they don’t control how apps handle data. Safety depends on OS permissions, app behavior, and vendor retention policies—not just hardware features.

Treat chip news as a performance and scheduling clue, then confirm with your device’s privacy and network controls.

Why did my privacy settings change after an update?

Updates can refresh permission prompts, reset defaults, or introduce new privacy categories tied to new features. Sometimes it’s a genuine “tightening” of controls; other times it’s simply a UI overhaul.

Re-check permissions after major updates and look for newly added capabilities, especially around accessibility, notifications, and analytics.

How can I reduce the amount of data AI apps collect?

Start with least privilege: remove high-risk permissions, disable personalization where available, and turn off background data for AI apps you don’t actively use. Then audit your browser and OS-level settings for tracking and ad personalization.

If you want a structured approach, align your settings with the mobile privacy settings walkthrough on this site.

Is it safe to use passkeys instead of passwords for privacy?

Yes—passkeys improve account security and reduce the chance of credential theft. Better account security reduces account takeover risk, which protects the data attached to your AI accounts and cloud services.

Just remember: passkeys don’t replace app permissions. You still need to restrict what AI apps can access on your device.

Action Plan: 10 steps to apply this Latest Tech News Breakdown today

If you only do a few things after reading tech news, do these. They’re designed to map directly to what AI, chips, and privacy updates change in 2026.

- Update your OS to the latest security patch level (go to Settings > System update). The AI and chip-related settings below are only worth tuning on top of a current security baseline.

- Update AI apps selectively: if an AI app update introduced major features, review permissions before using them.

- Revoke context permissions you don’t use (notifications, clipboard, accessibility).

- Turn off “improve services” data sharing unless you intentionally opted in.

- Check background network activity and disable “background data” for AI apps that don’t need it.

- Use offline modes for translation and summarization when available.

- Limit document ingestion: don’t upload sensitive files to AI tools unless you confirm retention settings.

- Enable account protections: set passkeys/MFA and review login history.

- Audit your browser privacy: turn down ad personalization and tracking for AI-related websites.

- Do one test: send a “sensitive” prompt and then verify what the app stores or logs (check activity pages). If there’s no visibility, reduce usage.

What I would do if you’re tech-curious but privacy-sensitive

If you like AI but hate surprises, use a “feature-first” approach. Enable one AI feature at a time (like local summaries), confirm permissions and network behavior, and then keep a short “enabled list.”

In my experience, this reduces the most common privacy regret: enabling too many context sources at once, then forgetting what you agreed to.

Conclusion: Your takeaway from this week’s Latest Tech News Breakdown

This week’s Latest Tech News Breakdown points to a clear pattern: AI gets smarter faster thanks to chip optimization, and privacy rules evolve to match the new data pathways. The practical takeaway is simple—don’t just read release notes. Validate permissions, check background activity, and constrain data flows to the features you actually want.

If you do only one thing today, start the 10-step action plan with a permission review for your AI apps, then verify whether prompts and context are staying on-device. That’s how you enjoy the upgrade without paying the privacy tax.

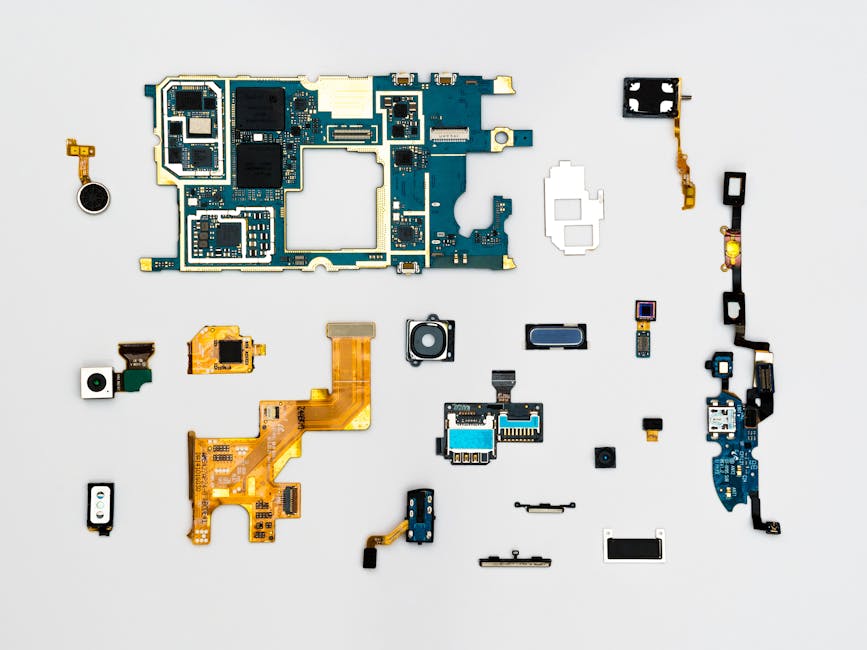

Featured image alt text (for your CMS): “Latest Tech News Breakdown featuring AI, chip hardware, and privacy settings on a smartphone screen.”